Student Data Privacy Checklist for AI Tools

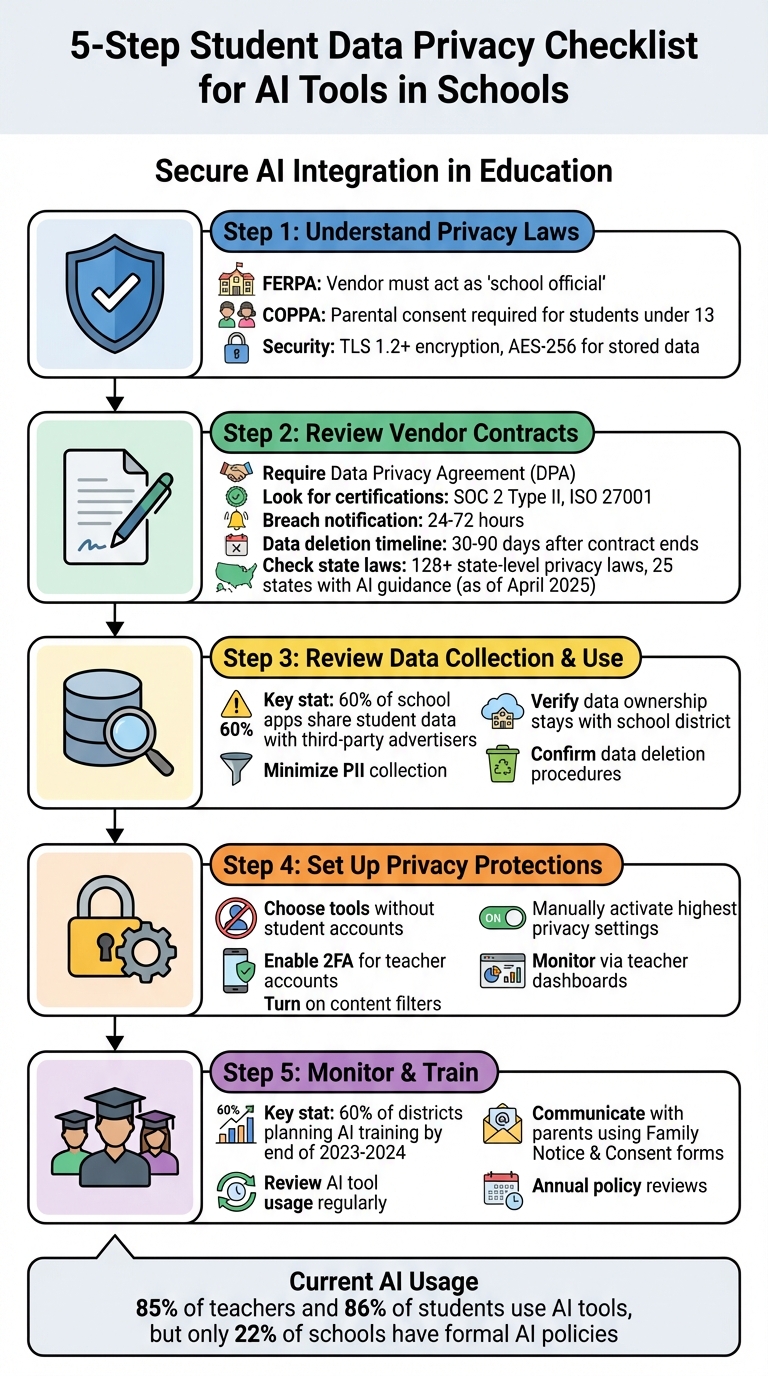

AI tools are increasingly common in classrooms, but they come with privacy risks. Schools must ensure these tools comply with laws like FERPA, COPPA, and PPRA to safeguard student data. Key steps include:

- Understand Privacy Laws: Verify compliance with federal and state regulations. For example, COPPA requires verifiable parental consent before an online service collects personal information from children under 13.

- Review Vendor Contracts: Ensure vendors sign agreements that restrict data use, like prohibiting AI training with student data.

- Minimize Data Collection: Strip personally identifiable information (PII) from student data before entering it into AI tools.

- Set Up Privacy Protections: Use tools that don’t require student accounts, enable strong access controls, and activate content filters.

- Train Staff: Educators should learn how to securely use AI tools and communicate privacy practices to parents.

How to Check AI Tools for Privacy Compliance

When introducing new AI tools into your school environment, it's essential to ensure they comply with privacy laws at both the federal and state levels. This not only protects your students but also keeps your institution on the right side of legal obligations.

Understand the Privacy Laws

Start by verifying that the tool adheres to key federal privacy laws like FERPA, COPPA, and PPRA:

- FERPA: The vendor must act as a "school official" under your district's direct control. This means they can only use student data for approved educational purposes.

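- COPPA: For students under 13, the tool must obtain verifiable parental consent before collecting personal information. Schools can generally provide that consent on parents' behalf only when the data is used solely for educational purposes, so any non-educational use requires explicit parental consent.

- PPRA: If the tool surveys students on protected topics such as political beliefs, religious practices, family income, or mental health, parents have rights to notice and to consent or opt out.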

Additionally, ask vendors to outline their security practices. The tool should use TLS 1.2 or higher for secure data transmission and AES-256 encryption for stored data. If the tool uses student information - like prompts or generated content - to train its AI model, confirm that this data is de-identified and that your school has the option to opt out of such practices.
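The transport half of that requirement can be spot-checked from any machine. The sketch below, using only Python's standard `ssl` and `socket` modules, builds a client that refuses anything older than TLS 1.2 and reports which protocol a server negotiates. The hostname in the usage note is a placeholder, not a real vendor endpoint.

```python
import socket
import ssl
from typing import Optional


def strict_tls_context() -> ssl.SSLContext:
    """Build a client context that refuses anything older than TLS 1.2."""
    # create_default_context() also turns on certificate and hostname checks.
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def negotiated_tls_version(host: str, port: int = 443,
                           timeout: float = 5.0) -> Optional[str]:
    """Connect to host and return the negotiated protocol, e.g. 'TLSv1.3'.

    Raises ssl.SSLError if the server cannot offer TLS 1.2 or newer.
    """
    context = strict_tls_context()
    with socket.create_connection((host, port), timeout=timeout) as raw:
        with context.wrap_socket(raw, server_hostname=host) as tls:
            return tls.version()
```

Calling `negotiated_tls_version("vendor.example.com")` (a hypothetical host) would return the protocol string on success. A check like this says nothing about encryption at rest; AES-256 for stored data still has to be confirmed through the vendor's documentation or audit reports.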

Once you've confirmed these basics, it's time to dive into the vendor contracts.

Review Vendor Contracts

Before rolling out any AI tool, require the vendor to sign a Data Privacy Agreement (DPA). This agreement should:

- Designate the vendor as a "school official" under FERPA.

- Specify that your district retains ownership of all student data, not the vendor.

- Clearly prohibit the use of student personally identifiable information for training or improving AI models.

Look for independent certifications, such as SOC 2 Type II or ISO 27001, which demonstrate the vendor's commitment to security standards. The contract should also include clear terms for breach notifications (within 24–72 hours) and data deletion timelines (within 30–90 days after the contract ends).
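The contract terms above lend themselves to a simple review checklist. Here is a minimal sketch of one, assuming the outer limits mentioned in this section (72 hours for breach notice, 90 days for deletion). The `VendorContract` fields and the `contract_gaps` helper are illustrative names, not part of any standard DPA template.

```python
from dataclasses import dataclass, field

# Thresholds taken from the ranges discussed above; adjust to district policy.
ACCEPTED_CERTS = {"SOC 2 Type II", "ISO 27001"}
MAX_BREACH_NOTICE_HOURS = 72
MAX_DELETION_DAYS = 90


@dataclass
class VendorContract:
    school_official: bool        # vendor designated a FERPA "school official"
    district_owns_data: bool     # district, not vendor, owns student data
    prohibits_ai_training: bool  # no training on student PII
    breach_notice_hours: int
    deletion_days: int
    certifications: set[str] = field(default_factory=set)


def contract_gaps(contract: VendorContract) -> list[str]:
    """Return a list of human-readable gaps; an empty list means none found."""
    gaps = []
    if not contract.school_official:
        gaps.append("vendor is not designated a FERPA school official")
    if not contract.district_owns_data:
        gaps.append("district ownership of student data is not stated")
    if not contract.prohibits_ai_training:
        gaps.append("AI training on student PII is not prohibited")
    if contract.breach_notice_hours > MAX_BREACH_NOTICE_HOURS:
        gaps.append("breach notification window exceeds 72 hours")
    if contract.deletion_days > MAX_DELETION_DAYS:
        gaps.append("data deletion window exceeds 90 days")
    if not contract.certifications & ACCEPTED_CERTS:
        gaps.append("no SOC 2 Type II or ISO 27001 certification listed")
    return gaps
```

A reviewer would fill in one `VendorContract` per tool and treat any non-empty result as a question to send back to the vendor, not as a legal determination.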

To streamline this process, many schools rely on the Student Data Privacy Consortium's National DPA template, which helps standardize requirements across vendors.

Finally, ensure the tool complies with any additional state-specific privacy laws.

Account for State Privacy Laws

Federal regulations are just one part of the puzzle. Schools must also navigate over 128 state-level student privacy laws. As of April 2025, 25 states - including Alabama, California, Connecticut, Ohio, Washington, and Wisconsin - have issued specific guidance for using AI in K-12 education.

For state-specific rules, consult resources like the Future of Privacy Forum's "Summary of State AI Guidance". Check if the tool is on district or state-approved lists or if it has passed evaluations like the CoSN K-12 Gen AI Readiness Checklist.

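For accessibility, ask vendors for a VPAT (Voluntary Product Accessibility Template) documenting how the tool conforms to accessibility standards. Under the ADA Title II web accessibility rule, districts in jurisdictions of 50,000 or more people must comply by April 24, 2026, while smaller districts have until April 26, 2027.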

Review How AI Tools Collect and Use Student Data

After confirming that an AI tool complies with legal standards, dig deeper into what data it collects and how that data is used. During the 2024–25 school year, 85% of teachers and 86% of students were using AI tools, yet research reveals that 60% of school apps share student data with third-party advertising platforms. That gap makes transparency in data practices essential.

Check What Personal Information Is Collected

AI tools often gather various types of data, including personally identifiable information (PII), academic performance metrics, behavioral patterns, learning analytics, communication records, and assistive technology data. PII can range from direct identifiers like names, email addresses, student IDs, Social Security numbers, and photos to indirect identifiers such as parent names, home addresses, and dates of birth.

A notable case involves Illuminate Education, whose security lapses, such as storing data in plain text, led to a breach that came to light in January 2022 and exposed the personal information of over 10.1 million students, including email addresses, birth dates, and even health-related details. The Federal Trade Commission later took action against the company over these practices. Separately, predictive algorithms have shown racial bias, misclassifying Black and Hispanic students at rates of 19% and 21%, respectively.

To reduce risks, prioritize data minimization. This means asking vendors if learning insights can be derived from de-identified or aggregated data instead of raw PII. If you're experimenting with a tool that hasn’t been approved by your district, ensure all PII is removed or anonymized before inputting it into the system.
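As a rough illustration of removing PII before input, the sketch below redacts a few pattern-shaped identifiers with regular expressions. The student ID format (`S` followed by six digits) is an assumption made for the example; real ID formats vary by district, and the function name `redact` is hypothetical.

```python
import re

# (label, pattern) pairs applied in order. The student ID format is an
# assumed example; substitute your district's actual format.
PATTERNS = [
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")),
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("STUDENT_ID", re.compile(r"\bS\d{6}\b")),
]


def redact(text: str) -> str:
    """Replace each pattern-shaped identifier with a bracketed label."""
    for label, pattern in PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text


print(redact("Contact jo.lee@school.example about S123456, SSN 123-45-6789."))
# prints: Contact [EMAIL] about [STUDENT_ID], SSN [SSN].
```

Pattern matching only catches identifiers with a predictable shape. Names, addresses, and free-text details need a roster-based replacement or a manual read-through, so treat a pass like this as a first filter and not as proof that the text is anonymized.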

Review Data Usage Policies

Understanding how vendors use student data is just as crucial as knowing what they collect. Look for a written agreement that designates the vendor as a "school official" under FERPA, which bars the vendor from using student data for purposes like advertising or AI model training. If student information - such as prompts or generated content - contributes to training the AI, there’s a higher risk that PII could inadvertently appear in future outputs.

Check whether the vendor shares data with third-party AI providers or subprocessors. Some tools include settings to disable data usage for model training, but these privacy controls often need to be manually activated. Ensure the contract explicitly states that your school district retains full ownership of all student data and any AI-generated outputs.

Once you’ve clarified data collection and usage, focus on ensuring that proper deletion protocols are in place.

Confirm Data Deletion Procedures

Having clear data deletion procedures is essential when accounts are closed or contracts expire. Vendors should provide written retention schedules specifying how long student data is stored during and after the contract period. Your agreement should also grant the school district the authority to request immediate deletion of student data.

Request sample audit logs or certificates of destruction to verify how vendors document the removal of PII. Ensure that deletion processes cover not only active databases but also backup copies and archived data. Before data is deleted, confirm that the tool allows you to export student work in a portable format, such as a PDF, to avoid losing important records.
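Tracking deletion is mostly date arithmetic plus a coverage check. The following sketch assumes a 90-day post-contract window (use whatever your agreement states) and a certificate of destruction that lists which storage scopes were wiped. The `DestructionCertificate` type and scope names are illustrative, not a vendor format.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Deletion should reach live systems, backups, and archives alike.
REQUIRED_SCOPES = {"active", "backups", "archives"}


@dataclass
class DestructionCertificate:
    deleted_on: date
    scopes: set[str]  # storage scopes the vendor certifies as wiped


def deletion_deadline(contract_end: date, window_days: int = 90) -> date:
    """Last acceptable deletion date under the assumed window."""
    return contract_end + timedelta(days=window_days)


def deletion_problems(contract_end: date,
                      cert: Optional[DestructionCertificate],
                      window_days: int = 90) -> list[str]:
    """List what is wrong with a vendor's deletion evidence, if anything."""
    if cert is None:
        return ["no certificate of destruction on file"]
    problems = []
    deadline = deletion_deadline(contract_end, window_days)
    if cert.deleted_on > deadline:
        problems.append(
            f"deleted {cert.deleted_on.isoformat()}, "
            f"after the {deadline.isoformat()} deadline"
        )
    missing = REQUIRED_SCOPES - cert.scopes
    if missing:
        problems.append("not covered: " + ", ".join(sorted(missing)))
    return problems
```

For a contract ending June 30, 2025, `deletion_deadline` gives September 28, 2025; a certificate that omits archives would come back flagged as `not covered: archives`.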

Set Up Privacy Protections for AI Tools

Once you've identified what data an AI tool collects and how it’s used, the next step is putting practical safeguards in place. With 30% of teachers using AI weekly but only 22% of schools having formal AI policies, many educators are left to navigate these challenges on their own. This step builds on your understanding of data collection practices by actively reducing risks during everyday use.

Choose Tools That Don't Require Student Accounts

One of the easiest ways to protect student privacy is by choosing AI tools that don’t require students to create accounts or share personal information. Tools that avoid collecting personally identifiable information (PII) also help schools steer clear of complex privacy law requirements. Look for platforms where students can join sessions using teacher-controlled codes or “Spaces” instead of individual logins.

Take Twin Pics as an example. This platform allows teachers to set up classrooms with unique join codes, letting students participate in daily AI-based image generation challenges without needing accounts or sharing any personal details. Its COPPA-compliant design ensures that teachers can focus on teaching skills like prompt engineering and critical thinking about AI-generated content while maintaining privacy. The platform also includes strict content filters and works on any device, making it ideal for K-12 classrooms.

Make sure the tool you select doesn’t require student PII or store outputs permanently.
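To make the account-free pattern concrete, here is a small sketch of how a join-code session might work in principle. It is a hypothetical illustration, not how Twin Pics or any other product is implemented: the `Classroom` class, the code length, and the "Guest N" labels are all assumptions.

```python
import secrets

# Alphabet without look-alike characters (0/O, 1/I/L) so codes are easy to read aloud.
ALPHABET = "ABCDEFGHJKMNPQRSTUVWXYZ23456789"


def new_join_code(length: int = 6) -> str:
    """Generate an unpredictable classroom code from a secure random source."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


class Classroom:
    """A teacher-controlled session that stores no student identifiers."""

    def __init__(self) -> None:
        self.join_code = new_join_code()
        self._seats = 0  # only a count is kept, never names or emails

    def join(self, code: str) -> str:
        """Admit a student by code and hand back an anonymous seat label."""
        supplied = code.strip().upper().encode()
        if not secrets.compare_digest(supplied, self.join_code.encode()):
            raise PermissionError("invalid join code")
        self._seats += 1
        return f"Guest {self._seats}"
```

The design point is that the only thing the session ever learns about a student is that they knew the code; there is no account record to breach, export, or delete later.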

Set Up Access Controls

Even if students don’t need accounts, teachers still need to secure their own access to AI tools. Start with strong passwords and enable two-factor authentication (2FA) for teacher accounts managing these platforms.

It’s also a good idea to use tools governed by district contracts. These agreements often ensure compliance with national and state privacy laws while giving districts control over data. Before introducing any new tool, consult your school’s IT or educational technology department for a list of pre-approved platforms. Choose tools that offer teacher-facing activity logs and real-time dashboards to monitor student interactions with the AI.

Don’t forget to manually adjust privacy settings to their highest level. Many tools allow you to disable the use of student input data for training AI models, but these settings often need to be activated manually. If you’re uploading student work for AI-assisted tasks like grading or feedback, remove all personal details. Replace names with aliases or random identifiers that cannot be traced back to individuals.
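Replacing names with aliases can be done consistently with a small lookup table kept on the teacher's own device. The sketch below is one way to do it, assuming you have a class roster as a list of names; `build_aliases` and `pseudonymize` are hypothetical helper names.

```python
import random
import re


def build_aliases(roster: list[str]) -> dict[str, str]:
    """Assign each student a neutral alias in shuffled order.

    Shuffling keeps the alias number from revealing roster position,
    which is often alphabetical.
    """
    names = list(roster)
    random.SystemRandom().shuffle(names)
    return {name: f"Learner {i}" for i, name in enumerate(names, start=1)}


def pseudonymize(text: str, aliases: dict[str, str]) -> str:
    """Replace every roster name in text with its alias (whole words only)."""
    if not aliases:
        return text
    # Longest names first so a full name wins over a first name alone.
    names = sorted(aliases, key=len, reverse=True)
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(name) for name in names) + r")\b",
        re.IGNORECASE,
    )
    lookup = {name.lower(): alias for name, alias in aliases.items()}
    # Fall back to a generic tag if case folding produces an unexpected key.
    return pattern.sub(lambda m: lookup.get(m.group(0).lower(), "[NAME]"), text)
```

The alias table itself is sensitive, since it is the key that re-identifies students. It should stay local and never be pasted into the AI tool alongside the pseudonymized text.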

Turn On Content Filters and Monitor Use

Once access is secure, focus on filtering and monitoring to reduce potential privacy risks. Content filters are a key defense against inappropriate AI outputs. Before students use any AI tool, configure the platform’s settings to define the level of assistance provided. Adjust these filters based on your instructional goals.

"Uses of AI should always start with human inquiry and always end with human reflection, human insight, and human empowerment." - Washington State guidance

Select tools that offer teacher-facing dashboards with real-time visibility into how students interact with the AI. These dashboards let you review questions students ask and the guidance they receive. Monitoring these interactions helps ensure AI is supplementing, not replacing, genuine learning. Consider setting up safety alerts that notify you of any conversations or outputs that raise compliance or safety concerns.

With 35% of teachers citing data privacy as the biggest barrier to adopting AI tools, proper monitoring can help address many of these worries. Regularly review AI outputs for potential biases - research indicates some AI systems display biases based on student names alone. Establish a clear process for reporting inaccuracies or biased results to both your administration and the vendor.

Monitor and Train for Privacy Protection

Setting up privacy measures is just the beginning. Privacy standards are constantly evolving as AI tools introduce new features, regulations change, and classroom practices shift. Some 60% of school districts planned to provide teacher training on AI by the end of the 2023-2024 school year, a sign that educators recognize that staying ahead means consistently monitoring and updating their practices to safeguard student data. This ongoing effort ensures that privacy measures keep pace with advancements in AI.

Review AI Tool Usage Regularly

Privacy protection isn't a one-and-done task. Regularly reviewing the AI tools used in classrooms is essential to ensure they continue to meet privacy standards. Check teacher-facing activity logs to see how students interact with AI tools and to identify any misuse, like using them as shortcuts rather than learning aids. After updates, confirm that privacy settings remain intact and perform an "inclusion audit" every few weeks to spot potential biases related to race, ethnicity, or gender.
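Confirming that settings survived an update is easier with a recorded baseline to compare against. The sketch below diffs a saved snapshot against the current values. The setting names are invented for illustration; real tools expose different options, and most will require reading them off an admin screen by hand.

```python
MISSING = "<missing>"


def settings_drift(baseline: dict, current: dict) -> dict:
    """Return {setting: (expected, actual)} for each setting that changed or vanished."""
    drift = {}
    for key, expected in baseline.items():
        actual = current.get(key, MISSING)
        if actual != expected:
            drift[key] = (expected, actual)
    return drift


# Hypothetical setting names recorded when the tool was first approved.
baseline = {
    "train_on_student_input": False,
    "content_filter": "strict",
    "retain_outputs_days": 0,
}
after_update = {
    "train_on_student_input": True,  # silently re-enabled by the update
    "content_filter": "strict",
}
print(settings_drift(baseline, after_update))
# prints: {'train_on_student_input': (False, True), 'retain_outputs_days': (0, '<missing>')}
```

A setting that disappears is flagged as well as one that changes, since a renamed or removed option often means the default has taken over.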

If a tool is no longer in use or its privacy practices become unclear, cross-check its deletion protocols to ensure student data is securely removed. Verify that tools remain on your district's approved list and that data protection agreements are still valid. Districts in jurisdictions of 50,000 or more people must also meet new ADA web accessibility standards for digital tools by April 24, 2026, with smaller districts following by April 26, 2027.

Maintain a workflow of "generate, evaluate, adapt, deliver", where every AI-generated recommendation or piece of content is reviewed by a teacher before it reaches students. This human oversight is critical for catching privacy or ethical issues early.

Train Teachers on Privacy Practices

While establishing privacy settings is important, teacher training ensures these standards are upheld over time. Training should focus on understanding AI: how it works, its limitations, ethical concerns, and how to critically evaluate AI-generated content. Counts vary by source and date, but roughly half of U.S. states had issued official AI guidance for schools by early 2025, and 8 of them emphasize professional development on responsible AI use and data privacy.

Teachers need to understand that, in an AI context, personally identifiable information (PII) covers not only names and IDs but also grades and attendance records tied to a student. Training should highlight data minimization techniques, such as replacing names with aliases or using placeholders like "Learner A" when entering data into AI tools.

"Students and staff will be given support to develop their AI literacy, which includes the knowledge, skills, and attitudes associated with how artificial intelligence works... as well as how to use artificial intelligence, such as its limitations, implications, and ethical considerations." - TeachAI

Provide teachers with templates for "Classroom AI Norms" and "Family Notice & Consent" forms to simplify communication with parents and other stakeholders. Additionally, a "Data Minimization Checklist" can help teachers assess each prompt: "Do I need names? Do I need full context?" Given how quickly AI evolves, review AI usage policies and training programs at least once a year.

Communicate with Parents and Administrators

Privacy protection also depends on clear communication with parents and administrators. Transparency builds trust and ensures that everyone understands how AI is being used. State privacy laws often require schools to disclose what student data is shared and who receives it. Create a straightforward Family Notice & Consent form that explains how AI tools are used, what data is entered, how it’s protected, and offers an opt-out option for families.

Share "Classroom AI Norms" in plain language with parents and students. These norms should clarify when AI is used, what it assists with, and its limitations (e.g., "AI drafts; teacher decides"). Ensure new tools are approved through pre-vetted lists or signed agreements. When introducing AI tools, explain their specific tasks - such as lesson planning, providing feedback, or creating materials - so administrators can assess potential risks.

Being transparent about AI usage models responsible behavior for students and encourages them to disclose their own use of AI tools. Use AI transparency pages from edtech companies to help parents understand how specific tools handle data and make decisions. For situations where AI tools are involved in significant decision-making that could impact students, consider requiring explicit parental consent or ensuring a "human in the loop" is always present.

Conclusion

Protecting student data privacy when using AI tools requires thoughtful planning and consistent action. Start by vetting tools for compliance: check if they're on district-approved lists, review their data protection agreements, and confirm that vendors don’t use student data for AI training. These steps establish a solid foundation for safeguarding sensitive information.

Next, minimize data exposure by removing personally identifiable information (like names, IDs, or grades) from AI inputs. Even small adjustments like these can significantly reduce risks.

Strong privacy protections are also essential. Opt for tools that don’t require student accounts, activate robust privacy settings, and ensure teachers oversee all AI-generated outputs. For instance, Twin Pics sets a strong example by eliminating the need for student accounts and implementing strict content filters. Its compliance with COPPA allows teachers to focus on teaching AI literacy while maintaining student safety.

Finally, ongoing monitoring and training are critical to keeping up with technological and regulatory changes. Regularly review activity logs, verify that privacy settings remain secure after updates, and train educators on effective data minimization practices. These efforts create a safer and more privacy-conscious classroom environment.

"AI technology holds immense promise in enhancing educational experiences for students, but it must be implemented responsibly and ethically." - David Sallay, Director for Youth & Education Privacy, Future of Privacy Forum

FAQs

What counts as student PII in AI prompts?

Student personally identifiable information (PII) is any detail that can identify an individual student, alone or in combination with other details. In an AI prompt, that includes names, email addresses, student IDs, and photos, as well as grades and attendance records tied to a specific student. Remove or replace these details before submitting a prompt: if they end up in a vendor's training data, they can resurface in later outputs and put the school out of compliance with student privacy laws.

Do teachers need parent consent to use an AI tool?

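Often not, provided the tool is district-approved and uses student data only for educational purposes; in that case, schools can typically consent on parents' behalf under COPPA. Parental consent or an opt-out is still advisable, and may be required by district policy or state law, when a tool collects personal information from students under 13 for other purposes or plays a role in significant decisions about students. Review district policies and confirm the tool has been properly evaluated before introducing it.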

What contract terms should a school require from an AI vendor?

At a minimum, a signed Data Privacy Agreement should designate the vendor as a "school official" under FERPA, state that the district retains ownership of all student data, and prohibit using student PII to train or improve AI models. It should also set breach notification deadlines (within 24–72 hours) and data deletion timelines (within 30–90 days after the contract ends), and address parental consent requirements where applicable, along with liability and indemnity clauses. These terms protect student information and hold vendors accountable.